Not all developers deal with big web sites or applications but many who do know this kind: The Monolith!

Some characteristics:

- Lots of code; probably hundreds of thousands of lines all together.

- Long history, relatively speaking.

- Project based development approach.

- No real describable architecture, chaotic organization.

- Hard to change, slow (and costly) to work with.

- Virtually irreplaceable.

Anatomy

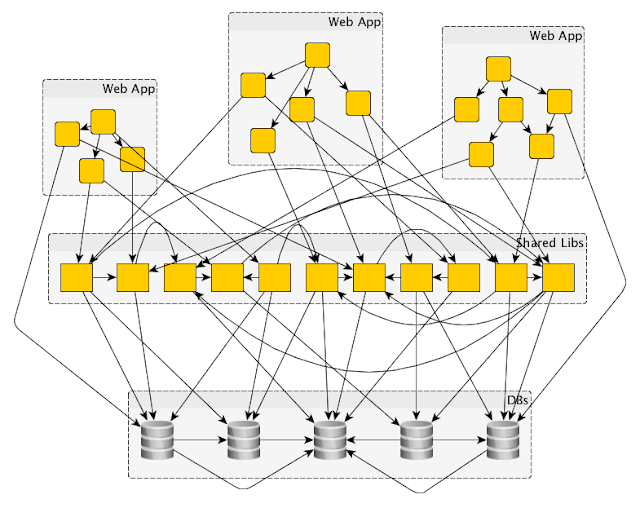

Behold, the Monolith! No, it does not have to be one website; it can be many, all tightly interconnected. There could be all kinds of examples, but here is one that demonstrates the anatomy of this monster. In some sense, it is hard to even depict this thing because there is no clear-cut way to make sense of it in the first place.

Database

Databases, many of them, typically interconnected somehow. It could be database links, shared views, remote queries, or stored procedures talking to other databases. The common denominator here is that at some point in history people felt that databases are a good place for application features and functionality. In fact, it is often very easy to integrate features and data across databases and then build a stored procedure layer on top of it - all in the name of "databases must have a stored procedure layer", and "code should be close to the data, and therefore a lot of it must live on top of the database engine". DB vendors have built all kinds of features people can use, so crafty developers (often DBAs) get ideas about how to utilize them.

Shared Data Access

Good developers try to layer their software, so they create data-access layers. But since features are built on all sorts of data, the data-access layer ends up connecting to any database it needs. This is acceptable to a developer who is under deadlines and other pressures, or who simply does not know better. We are also good citizens, so we try to reuse software and depend on other such libraries to do our bidding.

Web Layer and Business Logic

Applications need to do some work, often referred to as business logic. Typically, you'd create some kind of layer for this kind of behavior, but the reality might be that no such thing really exists. More likely, this type of code is stuffed right into web controls, page backing code, or anything comparable. Also, remember the good old database and stored procedures, which seem to do a lot of this work, too. This code is all over the place, so much so that you have a really hard time understanding what is going on. Over time, people just copy-pasted bits and pieces of this functionality wherever it was needed because there are no rich models or anything resembling a layer that you could utilize.

In the end, you have some kind of pancake of business logic and web layer, all intertwined. There might be some attempt at a Model View Controller (MVC) type of thing, or an alternative such as Model View ViewModel (MVVM), but it is there mostly in name only. Say you had to build a mobile view to support mobile browsers; well, good luck building it in the same web app.

How Did It Happen?

I already alluded to this, but the classic Big Ball of Mud document is a pilgrimage destination for anyone who wants to know. The Monolith is really just a version of the shanty town architecture described in BBoM.

So, it is more or less inevitable, and understandably so. Projects are a mainstay of work in any typical organization. Projects have boundaries based on some finite feature set that limits your design space. The focus of work jumps around between largely unrelated projects. People who do the work keep changing; people come in, people leave. There might be many teams, or there are really no consistent teams at all. These patterns keep repeating year after year, project after project.

Not only that, but to the dismay of software developers, many stakeholders have no stake at all in creating good architecture, nor are they interested in paying for it. There might even be little chance that you can explain to them why they have to do it in the first place. In fact, they might even be sympathetic to your concerns but as the next project rolls in, it is clear to you that they still expect you to deal (almost exclusively) with stuff that matters to them.

How do I Survive?

Considering that you have a big web site (or any other big application) and you have this kind of problem, where do you even start? Your approaches are:

- Rewrite the entire thing

- Rewrite it piece by piece

- Surrender

The Big Rewrite

This approach often sounds attractive but unless you have solid support and business need from stakeholders and management, this is not going to happen. Usually if this approach is taken, the system is so bad that it threatens the business in some way. Or, in the happier case, the organization has ample resources and time, so the project is taken on because there is some related business opportunity, but rarely so.

Rewrites may take a long time, and are costly and risky projects. You will also have significant difficulties capturing business rules, and old functionality of a system that has been cemented in a messy and incoherent code base. Flipping a switch on an entire system is a difficult thing to do and requires a lot of strategizing and thought.

Rewrite Piece by Piece

This approach requires taking every opportunity to improve the system. You will spend a significant amount of time in all your projects cleaning things up because projects are typically the only effective vehicle for this work. This will stretch all project timelines, so you still need management support for your work, but you may have better luck selling your plan because of the piecemeal approach.

To avoid some undue costs, you may limit yourself to working on fixes on the parts that are core to the system or are causing otherwise constant and significant costs to maintain. How do you know what is core? It is usually a part of the system that requires constant work to support the business. This focus may shift over time, as the business shifts focus, so it is not the same thing forever!

Surrender

Some systems do not require changes often. It may not be worth the rewrite or any kind of fix campaign. You probably just want to stabilize the system to keep it going in this case. You may never get the management support that you need to do the work, either. These may be things that you do not like, but it is still a scenario that can happen.

Strategies

I will review a couple of strategies for doing a piece by piece rewrite. I think this is the most common workable scenario for big systems.

Transform from Inside Out

What I mean by this:

- Establish modularization

- Utilize layering

- Use common infrastructure and frameworks

- Use proper Object Oriented Programming (Domain Driven Design etc.)

All this type of work would be done inside the Monolith application to slowly make it better from inside out, so that we eventually end up with a workable application.

This may be your first stab at the problem so this is an obvious choice. I certainly tried it. I would just say that this strategy has its limitations if your system is rather large. If you are looking at a scenario like in the anatomy picture, you are not likely to win with this strategy.

The Monolith actually has inherent gravity that does not allow you to escape into a green garden where you can maintain your newly hatched good software. You may rewrite parts of your system, but you almost never can remove the old code either because of the messy interconnected nature of The Monolith. You are also still being limited by the project nature of work which does not give you the room or freedom to roam in large swaths of software to detangle and replace things. In addition, you may attempt to aggressively create 'reusable' code and libraries but forget that reusable code creates tight dependencies, which is roughly the opposite of what you really want to achieve.

- Your new code and libraries simply add more weight. It's still a monolith but now even bigger!

- You can't isolate yourself from the bad parts very effectively to escape the gravity.

Break the Monolith

I would favor this strategy because it starts to deal with the actual problem. Break your monolith up into smaller applications that together form the system. No matter how messy your system is under the hood, on a conceptual level your monolith still has groups of features (as seen by the user) that go together. These are the natural fault lines between the applications that you want to split off from the Monolith.

Your challenge is to detect the fault lines, take a scalpel, and cut. When doing so, you will copy code, even duplicate databases, and it is OK. You simply want to create an app that functions largely in isolation, from the database level up. The main rule is: share as little as possible, and prefer copying over sharing.

There are many benefits to this approach:

- Much smaller and manageable application.

- You can change it in isolation without breaking something else.

- You can assign a team to work on it.

- You can do this gradually, piece by piece.

- You can choose any technology stack for the app.

The main challenge? Integration with other parts of the system. But note how this is the exact same challenge that the monolith sought to solve! The monolith approach was simply to share code, share databases, and so on. But that is a bad way to integrate big systems because you cannot operate, manage or change things that are too tightly integrated. Every system has bad code, so that is not even the main point here.

Also, now you can circle back, and "Transform from Inside Out" each of the small applications that you split off. So, first split, then transform. If you have the luxury to rewrite the split off app, then you can do that, too!

Anatomy of an App

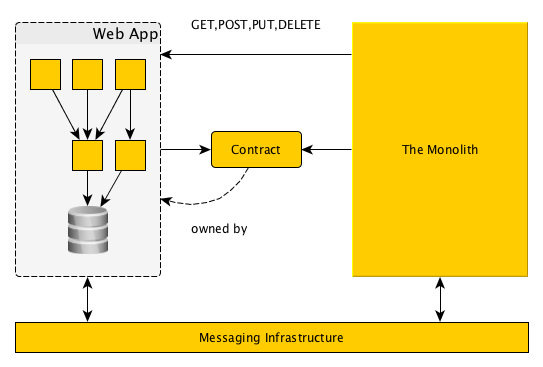

So, what should these applications look like? None of this is a big secret, but let's go over it anyway. You break off your first app from the monolith, so what should happen?

- Your app should run without the monolith; it does not care if the rest of the system runs or not, and it is happy to do whatever it needs to do without the rest.

- Your app has some data storage typically, a database if you will. The difference here is that it is not shared with any other app. This data is completely private to the app, and all the code that lives outside this app never touches this data.

- Your app integrates with the monolith or other apps by asynchronous messaging or by web level services. The integration is governed by contracts that are agreements about what goes on the wire and how to talk to the other side. Now, your app will own the contracts for the integration points that it chooses to advertise. There is no other way for another app to get access or visibility to the services.

The point of this approach is to protect the application from getting locked up in ways that prevent us from changing the innards. You do not want to sink back to the situation where you can't change your app because of the way you chose to integrate things.
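As a rough illustration of the rules above, here is a minimal Python sketch of an app that keeps its storage private and exposes only a contract-shaped view of it. Everything in it is an assumption made for the example: the `ProfileApp` class, the `accounts` table, the `get_public_profile` method, and the use of an in-memory SQLite database as a stand-in for the app's real data store.

```python
import sqlite3
from typing import Dict, Optional


class ProfileApp:
    """Hypothetical split-off app that owns its data store outright."""

    def __init__(self) -> None:
        # Private database: no other app gets a connection to it.
        self._db = sqlite3.connect(":memory:")
        self._db.execute(
            "CREATE TABLE accounts ("
            "id INTEGER PRIMARY KEY, "
            "email TEXT NOT NULL, "
            "password_hash TEXT NOT NULL)"
        )

    def register(self, email: str, password_hash: str) -> int:
        cursor = self._db.execute(
            "INSERT INTO accounts (email, password_hash) VALUES (?, ?)",
            (email, password_hash),
        )
        self._db.commit()
        return cursor.lastrowid

    def get_public_profile(self, account_id: int) -> Optional[Dict[str, str]]:
        """Advertised integration point: returns contract fields only."""
        row = self._db.execute(
            "SELECT id, email FROM accounts WHERE id = ?", (account_id,)
        ).fetchone()
        if row is None:
            return None
        # The table layout can change freely as long as this shape holds.
        return {"account_id": str(row[0]), "display_name": row[1].split("@")[0]}
```

In a real system the advertised method would sit behind a web service or a message handler rather than a direct call, but the idea is the same: callers depend on the returned shape, never on the tables behind it.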

One final point is to not go overboard. Do not create too many micro applications; choose bigger, cohesive collections of features that go together. The size may vary, but when you start to look at a pile of code that is several thousand lines, there might be something worthy of a separate app there. Integration is not the easiest thing in the world to get right either, so do not create unnecessary work and complications by creating applications that can't really function on their own. There should not be that many integration needs if you get the size right, though that also depends on the nature of the application.

Monolith Conquered

Once you have taken this path, you may be looking at something like the picture below later. You have now split your big monolithic monster into manageable chunks that mostly just hum along on their own.

You will copy reference data via messaging, and integrate web features using typical web integration techniques.

Messaging

Messaging is important for several reasons. It helps you to build loosely coupled apps, which is what we are looking for. Here you have the applications fire one-way events that other applications can subscribe to. I would not favor bidirectional request-response patterns here; we can use the web for that.

For example, if you have an application that handles user profiles and login, you may fire events related to things that users do in that app.

- Account Created

- Account Closed

- Account Edited

- User logged on

- User logged out

... just to give a few examples. Pat Helland's document gives an idea of what the messages should look like on the wire. The main idea is not to leak details about your internals in your messages, since that binds you too tightly to other applications again. So, do not use your internal models on the wire! This is what the contracts are for. The second important thing is to "tell" what your application is doing, rather than tell some other application to do something. This is sometimes called the Hollywood Principle or Inversion of Control.
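To make the "no internal models on the wire" rule a bit more concrete, here is a small Python sketch. The `Account` model, the `AccountCreated` contract, its field names, and the JSON encoding are all assumptions made for illustration; they are not taken from Pat Helland's document or from any particular messaging product.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class Account:
    """Internal model of a hypothetical profile app; never sent on the wire."""
    id: int
    email: str
    password_hash: str
    marketing_flags: int


@dataclass(frozen=True)
class AccountCreated:
    """Wire contract owned by the profile app; only these fields are public."""
    event_type: str
    version: int
    account_id: str
    occurred_at: str  # ISO 8601 timestamp in UTC


def to_account_created(account: Account, now: datetime) -> AccountCreated:
    # Map field by field so internal details cannot leak out by accident.
    return AccountCreated(
        event_type="AccountCreated",
        version=1,
        account_id=str(account.id),
        occurred_at=now.astimezone(timezone.utc).isoformat(),
    )


def serialize(event: AccountCreated) -> str:
    # The event states what happened; it does not tell anyone what to do.
    return json.dumps(asdict(event), sort_keys=True)
```

The explicit mapping function is the design choice worth noting: serializing `Account` directly would be less code, but every internal field rename would then become a breaking change for subscribers.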

If you then require reference data, or your application needs to react to something that another application is doing, you subscribe to the relevant events. Once you receive them, you act on the events, and do what your application chooses to do. You can store data in your internal database, or fire up processes to do work. Regardless, it is up to the app to decide how to react, and it may even send events of its own based on its internal processing.
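The subscribe-and-react flow could be sketched like this in Python. The `EventBus` class is an in-memory stand-in for a real message broker, and the `ForumApp` class, the `ForumPostsHidden` event, and the dictionary message shape are hypothetical names chosen for the example.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Set

Event = Dict[str, str]
Handler = Callable[[Event], None]


class EventBus:
    """In-memory stand-in for a message broker (an assumption of this sketch)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> None:
        # One-way: the publisher gets no reply and does not know who listens.
        for handler in list(self._handlers[event["event_type"]]):
            handler(event)


class ForumApp:
    """Hypothetical app that keeps its own copy of account reference data."""

    def __init__(self, bus: EventBus) -> None:
        self._bus = bus
        self._active_accounts: Set[str] = set()  # private to this app
        bus.subscribe("AccountCreated", self._on_account_created)
        bus.subscribe("AccountClosed", self._on_account_closed)

    def _on_account_created(self, event: Event) -> None:
        self._active_accounts.add(event["account_id"])

    def _on_account_closed(self, event: Event) -> None:
        self._active_accounts.discard(event["account_id"])
        # The app decides how to react and may fire events of its own.
        self._bus.publish(
            {"event_type": "ForumPostsHidden", "account_id": event["account_id"]}
        )

    def can_post(self, account_id: str) -> bool:
        # Answered from local data; no call to the profile app is needed.
        return account_id in self._active_accounts
```

Because the forum answers `can_post` from its own copy of the data, it keeps working even when the app that publishes account events is down.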

This type of integration allows you to create loosely coupled applications, but also allows very rich interaction that often responds well to previously unseen needs.

Web Integration

Often, big web applications show information on the screen from various feature sets. Your front landing page may show some popular articles, forum threads, and other distilled user content. These views may then come from a number of applications that you have split off.

This creates an integration problem that you can solve on the web level.

- You may pull views on the server side.

- You can use Ajax to let the browser render the views.

- Or you can use some hybrid where you pull data from the server, but render on the browser.

There are different challenges to these approaches that you may have to solve, but generally, it is all doable with relatively little effort. I would favor integration in the browser if it is in any way feasible because the browser has a sophisticated web page loading and caching model that avoids a lot of problems on the server side.

Try to keep all web artifacts including JavaScript and style sheets with the application that owns the web views you are serving. This way, you can make integration very painless and clean.
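For the first option in the list above, pulling views on the server side, a landing page could be assembled roughly as follows. This is only a sketch: `compose_page` and the fetcher callables are hypothetical, and in a real deployment each fetcher would make an HTTP request to the app that owns the view. Replacing a failed fragment with a placeholder comment is also an assumption of the example, not something the approach requires.

```python
from typing import Callable, Dict

# A fetcher returns the HTML fragment served by the app that owns the view.
FragmentFetcher = Callable[[], str]


def compose_page(fetchers: Dict[str, FragmentFetcher]) -> str:
    """Build one page from view fragments owned by separate apps."""
    sections = []
    for name, fetch in fetchers.items():
        try:
            html = fetch()
        except Exception:
            # A failing app only loses its own slot; the page still renders.
            html = f"<!-- {name} unavailable -->"
        sections.append(f'<section id="{name}">{html}</section>')
    return "<main>" + "".join(sections) + "</main>"
```

The browser-side variants follow the same shape, with the fetch and the fallback moved into JavaScript running on the page.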

Web Infrastructure

A few words about the web infrastructure you can use to build your apps and integrate them into one big application. You may ask how to deploy such an application in pieces. A few approaches come to mind:

- Deploy to a web container, such as Tomcat (Java) as a WAR (web archive), or IIS with .NET as virtual web apps, to build one web site.

- Use a reverse proxy to build a site from a number of servers and web containers in them.

- Combine the two so you can scale any app as you need, but let the user see just one web site.
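The routing decision a reverse proxy makes can be sketched in a few lines of Python. The path prefixes and upstream host names below are made up for the example, and a real setup would express the same table in the proxy's own configuration rather than in application code.

```python
from typing import Dict

# Hypothetical routing table: URL path prefix -> upstream app base URL.
ROUTES: Dict[str, str] = {
    "/profile": "http://profile-app.internal:8080",
    "/forum": "http://forum-app.internal:8080",
    "/": "http://legacy-monolith.internal:8080",  # everything not yet split off
}


def resolve_upstream(path: str, routes: Dict[str, str] = ROUTES) -> str:
    """Return the upstream URL that should serve the given request path."""
    # Try longer prefixes first so "/forum" is checked before the catch-all "/".
    for prefix in sorted(routes, key=len, reverse=True):
        if prefix == "/" or path == prefix or path.startswith(prefix + "/"):
            return routes[prefix] + path
    raise LookupError(f"no route for {path}")
```

With a catch-all entry pointing at the old monolith, each split-off app only needs one new line in the table, and the user still sees a single web site.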

Summary

It is possible to build reasonable size applications that mostly stand on their own, scale on their own, and you do not suffer from the monolith mess. You can assign teams to the apps, and you can fix the internals in separation from other applications. Your system stays changeable and you can respond to the needs of the business.

Note that you can do all of this without sharing code between your apps, and you can choose different technology stacks, programming languages, and solutions. All of it can be made to work together and managed with fewer headaches.

You can take this approach and apply it over time, little by little. You are not forced to do the big rewrite, or to take on all problems at the same time.

So, happy monolith slaying!